Abstract

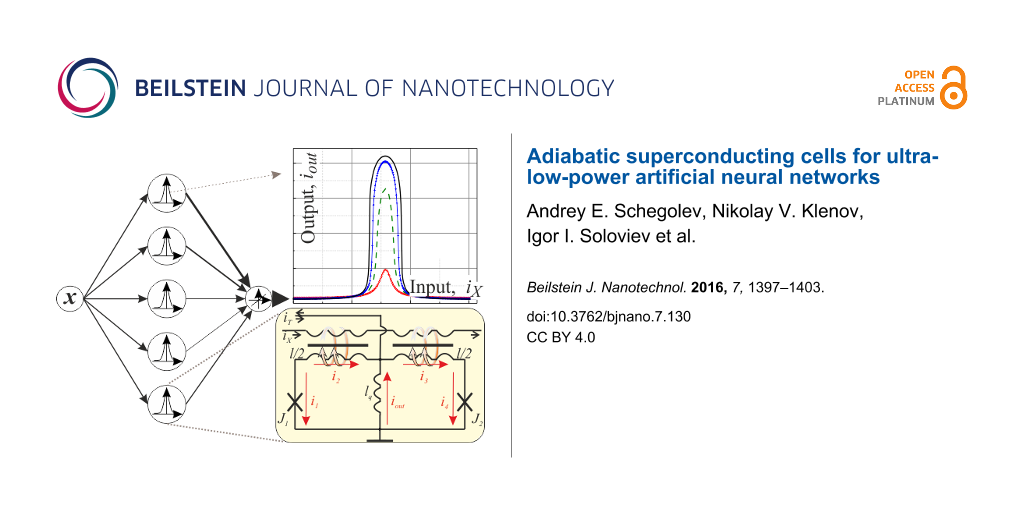

We propose using superconducting quantum interferometers to implement neural network algorithms with extremely low power dissipation. The proposed adiabatic elements are Josephson cells with sigmoid- and Gaussian-like activation functions. We optimize their parameters for use in three-layer perceptron and radial basis function networks.

Findings

Artificial neural networks (ANNs) are widely known for their applications in artificial intelligence and machine learning [1]. The future of cellular and satellite communications, radar systems, and deep-sea and space exploration will likely depend closely on the ability of ANNs to provide effective solutions to problems such as the classification and recognition of signals or images [2-6]. Receiving systems used in these areas require high energy efficiency, high sensitivity and versatility in signal processing. This makes superconducting electronic components a natural choice.

Superconducting digital receivers and computing are emerging technologies in the market for high-speed, high-frequency electronics [7]. The advantages of a superconducting digital RF receiver [8] are a high sampling rate and the quantum precision of quantization. Together they allow direct digitization of incoming wideband RF signals without conventional channelization and downconversion. Combining such receivers with highly sensitive, tunable, active superconducting antennas [8-11] and with ANNs opens the way to a cognitive radio correlation receiver. Unfortunately, among superconducting ANNs [12,13], those for signal classification and recognition remain less developed.

Earlier works sought to solve the recognition problem with perceptron ANNs whose SQUID-based neurons switch into the resistive state [14,15]. In subsequent variations [16,17], this switching was found to drastically reduce the energy efficiency of the superconducting circuit. In another recent approach to the multilayer perceptron, SQUIDs were used as nonlinear magnetic flux transducers, allowing the ANN to remain in the superconducting state [18]. The neuron scheme implemented there is quite analogous to the quantum flux parametron (QFP) [19,20], the basic cell of a superconducting logic family known for its high energy efficiency. It has been shown experimentally that QFP-based circuits operated in the adiabatic regime can outperform their semiconductor counterparts in energy efficiency by seven orders of magnitude, including the power required to cool the superconducting circuit [21-24]. The activation function of the QFP neuron was not analyzed in [18], and our analysis shows that it is not well suited to the chosen type of network.

The activation function usually has a highly nonlinear form and is a key characteristic of a neuron. Note that semiconductor-based neurons contain approximately 20 transistors or more, because the transistor current-voltage relation lacks the required nonlinearity. ANNs are typically implemented on field-programmable gate arrays (FPGAs), which makes them relatively slow and costly in hardware and power. The basic element of a superconducting circuit is the nonlinear Josephson junction, which is about three orders of magnitude faster than a conventional transistor. In contrast to semiconductor neurons, a superconducting neuron typically consists of just a few (two or three) Josephson junctions. This presents a distinct opportunity to develop energy-efficient, high-density, fast superconducting ANNs for cognitive receiving systems.

It has been shown that the transfer function of a Josephson structure (e.g., a bi-SQUID or a SQIF) can be precisely designed by combining basic SQUID cells with known characteristics [25-28]. Inspired by these works, in this letter we describe designs for superconducting neurons with sigmoid- and Gaussian-like activation functions. Because they are based on a simple parametric quantron cell, our neurons allow an ANN to operate in an extremely energy-efficient, adiabatic regime. The neurons are proposed for perceptron and radial basis function (RBF) ANNs, which solve the signal recognition and identification problems, respectively. The complexity of these networks could be increased with further development of nanotechnology [29], through the implementation of nanoscale Josephson junctions (e.g., based on variable-thickness bridges [30]). Finally, we compare the probability-of-error curves of RBF ANNs based on the proposed neuron with those of ANNs based on an ideal neuron with a Gaussian activation function.

Sigma-cell: the basic element for a multilayer perceptron

A multilayer perceptron (MLP) is a feed-forward ANN model that maps input data onto a set of outputs [1]. An MLP consists of multiple layers of nodes in a directed graph with each layer fully connected to the next one. Each node is treated as a neuron whose activation function usually has a sigmoid-like shape.
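As a reference point for what the superconducting neuron has to emulate, here is a minimal forward pass of a three-layer perceptron (input, hidden and output layers) with logistic-sigmoid activations. The layer sizes and random weights are hypothetical. Applying the sigmoid on the output layer as well is an assumption, since output layers are often linear.

```python
import numpy as np

def sigmoid(x):
    """Logistic activation: the idealized sigmoid shape a neuron should reproduce."""
    return 1.0 / (1.0 + np.exp(-x))

def mlp_forward(x, weights, biases):
    """Propagate input x through fully connected layers.

    weights[k] has shape (n_out, n_in) for layer k; every layer applies the
    sigmoid (an assumption of this sketch).
    """
    a = np.asarray(x, dtype=float)
    for W, b in zip(weights, biases):
        a = sigmoid(W @ a + b)   # weighted sum followed by the nonlinearity
    return a

rng = np.random.default_rng(0)
# Hypothetical 4-input, 3-hidden, 2-output network with random weights.
weights = [rng.normal(size=(3, 4)), rng.normal(size=(2, 3))]
biases = [np.zeros(3), np.zeros(2)]
y = mlp_forward([0.5, -1.0, 0.2, 0.0], weights, biases)
print(y.shape)  # (2,)
```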

We begin our search for an MLP neuron by analyzing the transfer function of a simple quantron (a single-junction superconducting interferometer, Figure 1a). This function relates the applied magnetic flux ΦX to the current Iout flowing in a superconducting loop of inductance Lq. Hereafter, we normalize inductance as lq = 2πIcLq/Φ0 (where Φ0 is the magnetic flux quantum and Ic is the critical current of the junction), magnetic flux as φX = 2πΦX/Φ0, and current as iout = Iout/Ic.
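The normalization above can be expressed as a few helper functions. The SI value of Φ0 = h/2e is standard. The junction parameters in the usage line are hypothetical, chosen only to show that lq ≈ 1 corresponds to IcLq ≈ Φ0/2π.

```python
import math

PHI_0 = 2.067833848e-15  # magnetic flux quantum h/(2e), in Wb

def normalized_inductance(i_c, l_q):
    """l_q = 2*pi*I_c*L_q/Phi_0, with I_c in A and L_q in H."""
    return 2 * math.pi * i_c * l_q / PHI_0

def normalized_flux(phi_x):
    """phi_X = 2*pi*Phi_X/Phi_0, with Phi_X in Wb."""
    return 2 * math.pi * phi_x / PHI_0

def normalized_current(i_out, i_c):
    """i_out = I_out/I_c."""
    return i_out / i_c

# Hypothetical junction: I_c = 100 uA, L_q = 3.3 pH.
print(round(normalized_inductance(100e-6, 3.3e-12), 3))  # 1.003
```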

![[2190-4286-7-130-1]](/bjnano/content/figures/2190-4286-7-130-1.png?scale=2.0&max-width=1024&background=FFFFFF)

Figure 1: (a) Schematic of a quantron. (b) Quantron flux-to-current transfer function for different values of the normalized ring inductance lq; insets show the harmonic amplitudes for selected curves.


The phase balance for the quantron loop and the relationship between the current through the Josephson junction and its phase, φ, are as follows:
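The display below reconstructs these two relations in the usual rf-SQUID form, which we take to correspond to Equations 1 and 2. The sign convention, that is, which circulation direction counts as positive iout, is our assumption.

```latex
\begin{aligned}
\varphi + l_q\, i_{\mathrm{out}} &= \varphi_X, \\
i_{\mathrm{out}} &= \sin\varphi .
\end{aligned}
```

Substituting the second relation into the first gives the parametric pair φX = φ + lq sin φ, iout = sin φ. Since dφX/dφ = 1 + lq cos φ, the curve iout(φX) is single-valued only for lq < 1.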

The output current can be represented as a parametric function of φ [25], which yields the nonlinear flux-to-current characteristic shown in Figure 1b. Note that the resulting transfer function is non-sinusoidal and that the amplitudes of its higher harmonics increase with the inductance lq.
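The parametric construction and its harmonic content can be sketched numerically. This assumes the quantron relations take the usual rf-SQUID form φX = φ + lq sin φ, iout = sin φ, which is our assumption about Equations 1 and 2, including the sign convention. The sketch is restricted to the single-valued regime lq < 1; the grid size and number of harmonics are arbitrary choices.

```python
import numpy as np

def quantron_transfer(l_q, n=4096):
    """Sample i_out(phi_X) on a uniform grid over one flux period.

    Assumes phi_X = phi + l_q*sin(phi), i_out = sin(phi); only 0 <= l_q < 1.
    """
    if not 0.0 <= l_q < 1.0:
        raise ValueError("this sketch covers only the single-valued regime 0 <= l_q < 1")
    phi = np.linspace(0.0, 2 * np.pi, n, endpoint=False)
    phi_x_param = phi + l_q * np.sin(phi)   # parametric abscissa
    i_param = np.sin(phi)                   # parametric ordinate
    phi_x = np.linspace(0.0, 2 * np.pi, n, endpoint=False)
    # phi_x_param is monotonic for l_q < 1, so periodic linear interpolation
    # resamples the parametric curve onto a uniform flux grid.
    i_out = np.interp(phi_x, phi_x_param, i_param, period=2 * np.pi)
    return phi_x, i_out

def harmonic_amplitudes(samples, k_max=4):
    """Amplitudes of harmonics 1..k_max of a real signal sampled over one period."""
    spectrum = np.fft.rfft(samples) / len(samples)
    return 2.0 * np.abs(spectrum[1:k_max + 1])

for l_q in (0.0, 0.3, 0.6, 0.9):
    _, i_out = quantron_transfer(l_q)
    print(l_q, np.round(harmonic_amplitudes(i_out), 3))
```

In this model the second-harmonic amplitude grows roughly as lq/2 for small lq. This is consistent with the statement that the higher harmonics grow with lq.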

A sigmoid function is mathematically the most suitable activation for solving image or pattern recognition problems with an MLP. This form of flux-to-current transformation can be obtained by combining the transfer function of the quantron with a linear dependence provided by a simple superconducting ring. Figure 2 shows the schematic of the resulting sigma cell (s-cell) as part of a three-layer perceptron. The magnetic flux is induced by the excitation current IX in the control line. This line is magnetically coupled to the quantron and to the linear cell with coupling coefficients k1 and k2, respectively. For simplicity, we assume that the quantron contains the inductances lq and l/2, and that the superconducting ring contains lq, l/2 and la. The current iT (see Figure 2) sets the operating point.
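The sigma-cell circuit equations are not reproduced in this text. The following is therefore only a toy illustration of the idea stated above, a quantron response combined with a linear dependence, and not a model of the s-cell circuit. It assumes the quantron relations φX = φ + lq sin φ, iout = sin φ. The one-period window φX ∈ [0, 2π], the sign of the combination and the slope heuristic are all our assumptions.

```python
import numpy as np

def quantron_current(phi_x, l_q=0.9, n=4096):
    """Quantron i_out(phi_X), assuming phi + l_q*sin(phi) = phi_X, i_out = sin(phi).

    Valid only in the single-valued regime 0 <= l_q < 1 (sketch assumption).
    """
    phi = np.linspace(0.0, 2 * np.pi, n, endpoint=False)
    return np.interp(phi_x, phi + l_q * np.sin(phi), np.sin(phi), period=2 * np.pi)

def toy_sigma(phi_x, l_q=0.9):
    """Toy s-cell curve on one period window: a linear term minus the quantron current.

    The slope 1/(pi/2 + l_q) is the mean slope of the quantron curve on its
    gently rising flanks on either side of the steep drop at phi_X = pi, so the
    linear term flattens those flanks and leaves a step centred at phi_X = pi.
    """
    slope = 1.0 / (np.pi / 2 + l_q)
    return slope * (phi_x - np.pi) - quantron_current(phi_x, l_q)

phi_x = np.linspace(0.0, 2 * np.pi, 9)
print(np.round(toy_sigma(phi_x), 2))
```

The result rises from about -1.27 to about +1.27 around φX = π with only a small ripple on the flanks. This shows qualitatively how a linear term can turn the periodic quantron response into a sigmoid-like step.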

![[2190-4286-7-130-2]](/bjnano/content/figures/2190-4286-7-130-2.png?scale=2.0&max-width=1024&background=FFFFFF)

Figure 2: Schematic of a three-layer perceptron conceived as layers of connected nodes (with different weights in the general case) in a directed graph, and of the proposed sigma cell with a sigmoid-like flux-to-current transformation based on a quantron and a superconducting ring. Intersecting connections can be realized in the "magnetic domain" via an inductance lq using the technique described in [18].


To analyze the flux-to-current transformation of the proposed cell, one can write equations analogous to Equations 1 and 2. Here, the phase balance and Kirchhoff's current law for this circuit give the following expressions:

Note that the expression for the output signal (Equation 4) contains a term that depends linearly on the input current iX. Figure 3 shows the resulting flux-to-current transfer function of the sigma cell. Increasing the normalized inductance of the superconducting ring (at fixed quantron parameters) reduces the slope of the overall characteristic; decreasing the coupling between this ring and the control line has the same effect. As Figure 3 shows, the overall slope practically vanishes at la = 1, l = 0.6 and k1 = k2 = 0.1, which is therefore a preferred parameter set for the physical implementation of the MLP neuron. The agreement between the resulting flux-to-current transformation and the sigmoid function can be further tuned by varying the quantron inductance lq.
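The inset of Figure 3c quantifies how far a transfer function departs from a sigmoid, but the fitting procedure is not described here. One plausible metric, offered as an assumption, fits A·tanh(b(x − x0)) + c and reports the RMS residual. This form is equivalent to a scaled, shifted logistic function, since σ(u) = [1 + tanh(u/2)]/2. For each trial steepness b, A and c follow from linear least squares, and x0 is fixed where the curve crosses its mid-level.

```python
import numpy as np

def sigmoid_deviation(x, y, slopes=np.linspace(0.1, 20.0, 400)):
    """Best fit of y ~ A*tanh(b*(x - x0)) + c; returns (rms, (A, b, x0, c))."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    mid = 0.5 * (y.min() + y.max())
    x0 = x[np.argmin(np.abs(y - mid))]          # centre at the mid-level crossing
    best_rms, best_params = np.inf, None
    for b in slopes:                            # grid search over steepness
        basis = np.column_stack([np.tanh(b * (x - x0)), np.ones_like(x)])
        (A, c), *_ = np.linalg.lstsq(basis, y, rcond=None)
        rms = np.sqrt(np.mean((basis @ np.array([A, c]) - y) ** 2))
        if rms < best_rms:
            best_rms, best_params = rms, (A, b, x0, c)
    return best_rms, best_params

x = np.linspace(-2.0, 4.0, 601)
rms_s, params = sigmoid_deviation(x, 2.0 * np.tanh(3.0 * (x - 1.0)) + 0.5)
rms_g, _ = sigmoid_deviation(x, np.exp(-x ** 2))
print(f"sigmoid-shaped input: rms={rms_s:.4f}; Gaussian bump: rms={rms_g:.3f}")
```

A sampled s-cell characteristic would be passed in as `(x, y)` in the same way. The steepness grid is a hypothetical range.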

![[2190-4286-7-130-3]](/bjnano/content/figures/2190-4286-7-130-3.png?scale=2.0&max-width=1024&background=FFFFFF)

Figure 3: (a), (b) Flux-to-current characteristics of the sigma cell for different parameters of the superconducting ring la and α = k1/k2 at l = 0.6. (c) Sigma cell flux-to-current transfer function for a set of values of the inductance lq. The inset shows the amplitude of the transfer function and its standard deviation from the sigmoid function (×100).


Gauss cell: the basic element for a probabilistic network

Identifying different signal sources is a difficult problem in cognitive signal processing, and MLPs are most frequently used to solve it. However, this type of neural network does not provide a probabilistic interpretation of the classification results and requires rather lengthy training [5,6]. RBF-based and probabilistic networks lack these disadvantages. In such networks, a decision requires estimating the probability density function for each class of radio signal sources, so the basic cell has to provide a Gaussian-like transfer function.
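The decision rule described above can be sketched as a Parzen-window (Specht-style) probabilistic neural network with equal class priors. The ideal Gaussian kernel stands in for the hidden-layer cell. The kernel width `sigma` and the training vectors are hypothetical.

```python
import numpy as np

def pnn_classify(x, train_x, train_y, sigma=0.5):
    """Probabilistic-neural-network decision with equal priors.

    Pattern layer: one Gaussian unit per stored training vector.
    Summation layer: mean activation per class, proportional to a kernel
    density estimate of p(x | class). Output: the class with the largest estimate.
    """
    x = np.asarray(x, dtype=float)
    train_x = np.asarray(train_x, dtype=float)
    train_y = np.asarray(train_y)
    d2 = np.sum((train_x - x) ** 2, axis=1)     # squared distances to stored vectors
    # Subtracting d2.min() rescales all activations by one common factor,
    # which avoids underflow without changing the decision or the posteriors.
    act = np.exp(-(d2 - d2.min()) / (2.0 * sigma ** 2))
    classes = np.unique(train_y)
    density = np.array([act[train_y == c].mean() for c in classes])
    return classes[np.argmax(density)], density / density.sum()

train_x = [[0.0, 0.0], [0.2, -0.1], [3.0, 3.0], [2.9, 3.2]]
train_y = ["A", "A", "B", "B"]
label, posterior = pnn_classify([0.1, 0.1], train_x, train_y)
print(label, np.round(posterior, 3))
```

Because every class shares the same kernel width and dimension, the Gaussian normalization constant cancels and can be omitted.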

The schematic of the proposed Gauss cell (G-cell) is shown in Figure 4. Qualitatively, its design can be understood as a combination of two s-cells that produces a bell-shaped transfer function from two sigmoid functions. Note that the resulting circuit is quite analogous to the above-mentioned QFP. The cell is a two-junction interferometer with a total normalized inductance l, composed of two Josephson junctions J1 and J2 shunted by the inductance lq. As before, the excitation current IX is applied to a control line that is magnetically coupled to the symmetric arms of the interferometer.
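The idea of building a bell from two sigmoids can be checked numerically. This sketch uses ideal logistic functions, not the physical G-cell response (Equation 7 is not reproduced here). The half-width and steepness are arbitrary, and comparing against a Gaussian with equal peak and equal second moment is our choice.

```python
import numpy as np

def logistic(x):
    return 1.0 / (1.0 + np.exp(-x))

def sigmoid_pair_bell(x, half_width=1.5, steepness=2.0):
    """Bell-shaped curve from two shifted sigmoids: a rise at -w minus a rise at +w."""
    return (logistic(steepness * (x + half_width))
            - logistic(steepness * (x - half_width)))

x = np.linspace(-6.0, 6.0, 1201)
bell = sigmoid_pair_bell(x)
peak = bell.max()
# Gaussian with the same peak height and the same second moment, for comparison.
sigma = np.sqrt(np.sum(x ** 2 * bell) / np.sum(bell))
gauss = peak * np.exp(-x ** 2 / (2.0 * sigma ** 2))
rms = np.sqrt(np.mean((bell - gauss) ** 2))
print(f"peak={peak:.3f}, sigma={sigma:.3f}, rms deviation={rms:.3f}")
```

The difference of two logistics equals a uniform window smoothed by a logistic kernel, so it is flatter-topped than a Gaussian. The RMS deviation is one way to quantify that mismatch.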

![[2190-4286-7-130-4]](/bjnano/content/figures/2190-4286-7-130-4.png?scale=2.0&max-width=1024&background=FFFFFF)

Figure 4: Schematic of an RBF neural network (whose output is a linear combination of radial basis functions of the input x and the neuron parameters) and of the proposed Gauss cell with a Gaussian-like flux-to-current transformation.


By analogy with Equations 1 and 2, the equations for the Gauss cell can be written in terms of the sum and difference phases, θ = (φ1 + φ2)/2 and ψ = (φ1 − φ2)/2:
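The G-cell equations themselves are not reproduced in this text, but the change of variables is exact. Inverting it and applying the sum-to-product identities expresses the junction currents sin φ1 and sin φ2 through θ and ψ. That the junction currents take this form, by analogy with Equation 2, is an assumption here.

```latex
\begin{aligned}
\varphi_1 &= \theta + \psi, \qquad \varphi_2 = \theta - \psi,\\
\sin\varphi_1 + \sin\varphi_2 &= 2\sin\theta\,\cos\psi,\\
\sin\varphi_1 - \sin\varphi_2 &= 2\cos\theta\,\sin\psi.
\end{aligned}
```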

Here, the term linear in the input current cancels in the expression for the output signal (Equation 7). Figure 5 shows the resulting G-cell flux-to-current transfer function. Increasing the normalized inductances l and lq increases both the amplitude of the transfer function and its standard deviation from a Gaussian function.

![[2190-4286-7-130-5]](/bjnano/content/figures/2190-4286-7-130-5.png?scale=2.0&max-width=1024&background=FFFFFF)

Figure 5: (a), (b) Gauss cell flux-to-current transfer function for different values of the interferometer and shunt inductances l and lq. Insets show the function amplitude and its standard deviation from a Gaussian function.


Figure 6 shows the simulated noise-immunity characteristics of an RBF ANN. The results for the G-cell implementation (with l = 1 and lq = 0.5, chosen to obtain a relatively large output signal) are compared with the ideal case of a true Gaussian activation function for the hidden-layer cells of the probabilistic network.
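This text does not state how Figure 6 was computed (signal set, noise model, network size). The sketch below is therefore only a generic Monte Carlo estimate of a probability-of-error curve for a small RBF classifier with a pluggable activation. The prototypes, noise levels, width and trial count are hypothetical.

With a single centre per class, any activation that decreases monotonically with distance yields the same nearest-centre decision, which gives an exact analytic check. Differences between an ideal Gaussian and a G-cell-like activation would only appear with several centres per class or with a non-monotonic (e.g. periodic) response.

```python
import math
import numpy as np

def gaussian_activation(d2, width):
    """Ideal Gaussian hidden-unit response to squared distance d2."""
    return np.exp(-d2 / (2.0 * width ** 2))

def rbf_decide(x, centers, labels, activation, width):
    """Hidden layer: one unit per centre; output layer: per-class sum; pick the max."""
    d2 = np.sum((centers - x) ** 2, axis=1)
    h = activation(d2, width)
    classes = np.unique(labels)
    scores = [h[labels == c].sum() for c in classes]
    return classes[int(np.argmax(scores))]

def error_probability(noise_std, activation=gaussian_activation, width=1.0,
                      trials=4000, seed=0):
    """Monte Carlo estimate of P(error) for noisy copies of two prototypes."""
    rng = np.random.default_rng(seed)
    centers = np.array([[1.0, 0.0, 1.0, 0.0],     # hypothetical class-0 signature
                        [0.0, 1.0, 0.0, 1.0]])    # hypothetical class-1 signature
    labels = np.array([0, 1])
    errors = 0
    for _ in range(trials):
        k = rng.integers(len(centers))
        x = centers[k] + rng.normal(scale=noise_std, size=centers.shape[1])
        errors += int(rbf_decide(x, centers, labels, activation, width) != labels[k])
    return errors / trials

for s in (0.25, 0.5, 1.0):
    # One centre per class -> nearest-centre decision; centre separation d = 2,
    # so the exact error probability is Q(d / (2 s)) = Q(1 / s).
    exact = 0.5 * math.erfc((1.0 / s) / math.sqrt(2.0))
    print(f"noise {s}: simulated {error_probability(s):.4f}, exact {exact:.4f}")
```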

![[2190-4286-7-130-6]](/bjnano/content/figures/2190-4286-7-130-6.png?scale=2.0&max-width=1024&background=FFFFFF)

Figure 6: Simulation results for the noise immunity characteristics of a G-cell based RBF ANN with (solid line) and without (dashed line) input data normalization. Dots represent the simulation with an ideal Gaussian activation function.


Note that the obtained sigmoid-like and Gaussian-like transfer functions are periodic because of magnetic flux quantization in superconducting interferometers. This limits the dynamic range of the ANN. We mitigate this issue by normalizing the input data.
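The normalization procedure itself is not specified here. A minimal sketch assumes simple per-feature min-max scaling into a flux window narrower than one period. The window centre and the 0.9π half-span are hypothetical choices.

```python
import numpy as np

def to_flux_window(data, center=np.pi, half_span=0.9 * np.pi):
    """Min-max map each feature (column) into [center - half_span, center + half_span].

    With half_span < pi the mapped inputs span less than one flux period 2*pi,
    so no two distinct inputs are folded onto the same point of a
    2*pi-periodic transfer function.
    """
    data = np.asarray(data, dtype=float)
    lo = data.min(axis=0)
    span = data.max(axis=0) - lo
    span = np.where(span > 0, span, 1.0)   # constant features map to the window edge
    unit = (data - lo) / span              # each column now lies in [0, 1]
    return center - half_span + 2.0 * half_span * unit

features = np.array([[0.0, 10.0], [5.0, 30.0], [10.0, 20.0]])
print(np.round(to_flux_window(features) / np.pi, 2))   # values in units of pi
```

In practice, the minimum and maximum would be taken from the training set and reused for test inputs. Test values outside that range could then still wrap into another period, which is the dynamic-range limit noted above.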

In conclusion, we have proposed two superconducting neurons for energy-efficient ANNs capable of operating in the adiabatic regime. They are intended for the two most frequently used network types: the perceptron and the probabilistic RBF network. The organization of these networks and their interfacing with well-developed adiabatic superconductor logic appear straightforward and will be addressed in our upcoming papers.

References

1. Rosenblatt, F. X. Principles of Neurodynamics: Perceptrons and the Theory of Brain Mechanisms; Spartan Books: Washington, DC, U.S.A., 1961.
2. Yan, Q.; Li, M.; Chen, F.; Jiang, T.; Lou, W.; Hou, T. Y.; Lu, C.-T. IEEE Trans. Wireless Commun. 2014, 13, 5893. doi:10.1109/TWC.2014.2339218
3. Munjuluri, S.; Garimella, R. M. Procedia Comput. Sci. 2015, 46, 1156. doi:10.1016/j.procs.2015.01.028
4. Farooqi, M. Z.; Tabassum, S. M.; Rehmani, M. H.; Saleem, Y. J. Network Comput. Appl. 2014, 46, 166–181. doi:10.1016/j.jnca.2014.09.002
5. Adjemov, S. S.; Klenov, N. V.; Tereshonok, M. V.; Chirov, D. S. Moscow Univ. Phys. Bull. (Engl. Transl.) 2015, 70, 448–456. doi:10.3103/S0027134915060028
6. Adjemov, S. S.; Klenov, N. V.; Tereshonok, M. V.; Chirov, D. S. Program. Comput. Software 2016, 42, 121–128. doi:10.1134/S0361768816030026
7. Nishijima, S.; Eckroad, S.; Marian, A.; Choi, K.; Kim, W. S.; Terai, M.; Deng, Z.; Zheng, J.; Wang, J.; Umemoto, K.; Du, J.; Febvre, P.; Keenan, S.; Mukhanov, O.; Cooley, L.; Foley, C.; Hassenzahl, W.; Izumi, M. Supercond. Sci. Technol. 2013, 26, 113001. doi:10.1088/0953-2048/26/11/113001
8. Mukhanov, O. A.; Kirichenko, D.; Vernik, I. V.; Filippov, T. V.; Kirichenko, A.; Webber, R.; Dotsenko, V.; Talalaevskii, A.; Tang, J. C.; Sahu, A.; Shevchenko, P.; Miller, R.; Kaplan, S. B.; Sarwana, S.; Gupta, D. IEICE Trans. Electron. 2008, E91, 306–317. doi:10.1093/ietele/e91-c.3.306
9. Soloviev, I. I.; Kornev, V. K.; Sharafiev, A. V.; Klenov, N. V.; Mukhanov, O. A. IEEE Trans. Appl. Supercond. 2013, 23, 1800405. doi:10.1109/TASC.2012.2232691
10. Spietz, L.; Irwin, K.; Aumentado, J. Appl. Phys. Lett. 2009, 95, 092505. doi:10.1063/1.3220061
11. Zhang, D.; Trepanier, M.; Mukhanov, O.; Anlage, S. M. Phys. Rev. X 2015, 5, 041045. doi:10.1103/PhysRevX.5.041045
12. Lanting, T.; Przybysz, A. J.; Smirnov, A. Yu.; Spedalieri, F. M.; Amin, M. H.; Berkley, A. J.; Harris, R.; Altomare, F.; Boixo, S.; Bunyk, P.; Dickson, N.; Enderud, C.; Hilton, J. P.; Hoskinson, E.; Johnson, M. W.; Ladizinsky, E.; Ladizinsky, N.; Neufeld, R.; Oh, T.; Perminov, I.; Rich, C.; Thom, M. C.; Tolkacheva, E.; Uchaikin, S.; Wilson, A. B.; Rose, G. Phys. Rev. X 2014, 4, 021041. doi:10.1103/PhysRevX.4.021041
13. Crotty, P.; Schult, D.; Segall, K. Phys. Rev. E 2010, 82, 011914. doi:10.1103/PhysRevE.82.011914
14. Harada, Y.; Goto, E. IEEE Trans. Magn. 1991, 27, 2863–2866. doi:10.1109/20.133806
15. Rippert, E. D.; Lomatch, S. IEEE Trans. Appl. Supercond. 1997, 7, 3442–3445. doi:10.1109/77.622126
16. Yamanashi, Y.; Umeda, K.; Yoshikawa, N. IEEE Trans. Appl. Supercond. 2013, 23, 1701004. doi:10.1109/TASC.2012.2228531
17. Onomi, T.; Nakajima, K. J. Phys.: Conf. Ser. 2014, 507, 042029. doi:10.1088/1742-6596/507/4/042029
18. Chiarello, F.; Carelli, P.; Castellano, M. G.; Torrioli, G. Supercond. Sci. Technol. 2013, 26, 125009. doi:10.1088/0953-2048/26/12/125009
19. Likharev, K. IEEE Trans. Magn. 1977, 13, 242–244. doi:10.1109/TMAG.1977.1059351
20. Hosoya, M.; Hioe, W.; Casas, J.; Kamikawai, R.; Harada, Y.; Wada, Y.; Nakane, H.; Suda, R.; Goto, E. IEEE Trans. Appl. Supercond. 1991, 1, 77–89. doi:10.1109/77.84613
21. Takeuchi, N.; Ozawa, D.; Yamanashi, Y.; Yoshikawa, N. Supercond. Sci. Technol. 2013, 26, 035010. doi:10.1088/0953-2048/26/3/035010
22. Takeuchi, N.; Yamanashi, Y.; Yoshikawa, N. Appl. Phys. Lett. 2013, 102, 052602. doi:10.1063/1.4790276
23. Takeuchi, N.; Yamanashi, Y.; Yoshikawa, N. Supercond. Sci. Technol. 2015, 28, 015003. doi:10.1088/0953-2048/28/1/015003
24. Xu, Q.; Yamanashi, Y.; Ayala, C. L.; Takeuchi, N.; Ortlepp, T.; Yoshikawa, N. Design of an Extremely Energy-Efficient Hardware Algorithm Using Adiabatic Superconductor Logic. In 15th International Superconductive Electronics Conference (ISEC), July 6–9, 2015; IEEE Publishing: Hoboken, NJ, U.S.A., 2015. doi:10.1109/ISEC.2015.7383446
25. Kornev, V. K.; Soloviev, I. I.; Klenov, N. V.; Mukhanov, O. A. Supercond. Sci. Technol. 2009, 22, 114011. doi:10.1088/0953-2048/22/11/114011
26. Soloviev, I. I.; Klenov, N. V.; Schegolev, A. E.; Bakurskiy, S. V.; Kupriyanov, M. Yu. Supercond. Sci. Technol. 2016, 29, 094005. doi:10.1088/0953-2048/29/9/094005
27. Kornev, V. K.; Soloviev, I. I.; Klenov, N. V.; Mukhanov, O. A. IEEE Trans. Appl. Supercond. 2007, 17, 569–572. doi:10.1109/TASC.2007.898119
28. Kornev, V. K.; Sharafiev, A. V.; Soloviev, I. I.; Kolotinskiy, N. V.; Scripka, V. A.; Mukhanov, O. A. IEEE Trans. Appl. Supercond. 2014, 24, 1800606. doi:10.1109/TASC.2014.2318291
29. Huth, M.; Porrati, F.; Schwalb, C.; Winhold, M.; Sachser, R.; Dukic, M.; Adams, J.; Fantner, G. Beilstein J. Nanotechnol. 2012, 3, 597–619. doi:10.3762/bjnano.3.70
30. Golikova, T. E.; Hübler, F.; Beckmann, D.; Klenov, N. V.; Bakurskiy, S. V.; Kupriyanov, M. Yu.; Batov, I. E.; Ryazanov, V. V. JETP Lett. 2013, 96, 668–673. doi:10.1134/S0021364012220043


© 2016 Schegolev et al.; licensee Beilstein-Institut.

This is an Open Access article under the terms of the Creative Commons Attribution License (http://creativecommons.org/licenses/by/4.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

The license is subject to the Beilstein Journal of Nanotechnology terms and conditions: (http://www.beilstein-journals.org/bjnano)